17 April 2021

Allocating people to teams is a common task in both sport and business. Often we want the teams to be as balanced as possible. But making the allocation can be a difficult problem, especially if there are many people or we need to form several teams.

In this article we build and analyze a model that allocates 32 people to four teams, with the objective of each team having an aggregate rating that is as balanced as possible. The task is complicated by having three types of position in the teams, with a diversity of ratings across the available people.

The model is built in Excel and solved using either Solver or OpenSolver.

Download the model

The model is available on GitHub.

Situation

We have 32 analysts that we need to form into four teams of eight. Each team already has a manager appointed.

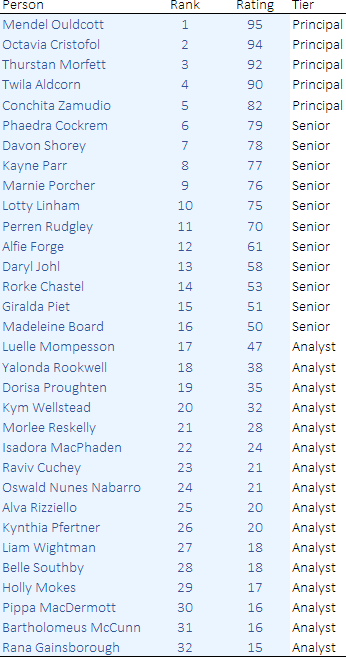

Each analyst has been assessed, resulting in a rating from 0 to 100, as shown in Figure 1. Based on their rating, the analysts are divided into three tiers:

- Principal, with rating from 80 to 100. There are 5 Principals.

- Senior, with rating from 50 to 79. There are 11 Seniors.

- Analyst, with rating from 0 to 49. There are 16 Analysts.

Our objective is to make the teams as evenly balanced as possible. Note that this is a somewhat ambiguous objective, which we'll need to interpret to build a model.

Conveniently, our list of potential team members has 16 Analysts, which fits neatly with our need for 4 teams. Therefore, we would like there to be 4 Analysts per team.

However, the number of Seniors and Principals is not so neat. We will need to allow the teams to have different numbers of Seniors and Principals. This will involve making a trade-off between the number of people at each tier and their ratings (which are, by design, higher for Principals than for Seniors).

Before proceeding, have a go at manually forming four teams. How close can you make their total ratings? How would you know if your solution could be improved?

Model design

Defining what "balanced" means

We want the teams to be balanced. We interpret this goal to mean that the total team member ratings for each team should be as similar as possible. Summing the individual ratings across all 32 people, the total is 1,567. Therefore, we expect the total ratings for each team to be around 1,567 / 4 = 391.75.

But the essence of this situation is that we don't know what the total ratings should be for each team. For example, picking teams of 1 Principal, 3 Seniors, and 4 Analysts can produce team ratings from 300 to 481. That's a wide range – much wider than we want.

Since we don't know what the total team ratings should be, we'll specify upper and lower bounds as variables and let the model decide how to minimize the difference between those bounds. That is a common technique for this type of situation.

Implementation

A model does what it is told, no more and no less

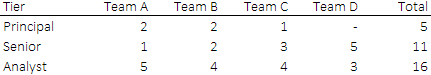

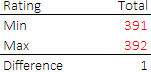

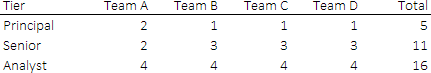

If we solve the model as designed above, then we get the optimal solution shown in Figure 2. The objective function has a value of 1, resulting from the total team ratings being either 391 or 392, which is excellent. But the composition of the teams is terrible. That is:

- Team D does not have a Principal, while Team A and Team B each have 2 Principals.

- Team D has 5 Seniors, while Team A has only 1.

- Even though we have 16 Analysts, the model didn't distribute them evenly across the teams (like we wanted it to).

The problem is that we have in mind a vision for what a good team composition looks like, but we didn't tell the model. Instead, we just told the model to minimize the difference between total team ratings, which it did very well.

In other words, the model did exactly what we told it to do. The fact that the model didn't fulfil our unstated requirements is a failure of our model design, rather than a failure of the model itself.

This situation often happens when developing a model. Unstated requirements only emerge when we see a solution that we don't like. Given this new information, we need to clarify – and perhaps expand – our requirements and then try again.

Improving our definition of a balanced team

So, what can we do? We want to form balanced teams, but what exactly does that mean? Our objective function produces balanced team ratings in aggregate, but it says nothing about team composition. It turns out that we also want each team to have a similar number of Principals, Seniors, and Analysts.

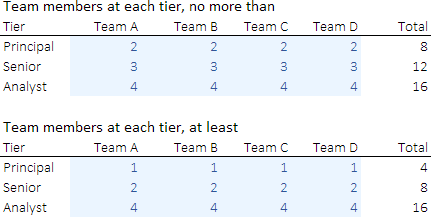

We already know that we want to have exactly 4 Analysts per team. But we don't know how many Seniors and Principals each team should have. Therefore, we specify upper and lower bounds, as shown in Figure 3.

That is, each team is allowed to have 1 or 2 Principals, and 2 or 3 Seniors. Note that we need to set these bounds carefully, to avoid making the problem infeasible. For example, we have 5 Principals. Given that we have 4 teams, requiring exactly 1 Principal per team would be infeasible. Similarly, we cannot require each team to have 2 Principals.

By making the Analyst upper and lower bounds the same, we effectively fix the number of Analysts at 4 per team – though we allow flexibility for different bounds, if we wanted to explore other team compositions.

With a clearer definition of what "balanced" means, we can now describe the final model.

Solver model

Objective function

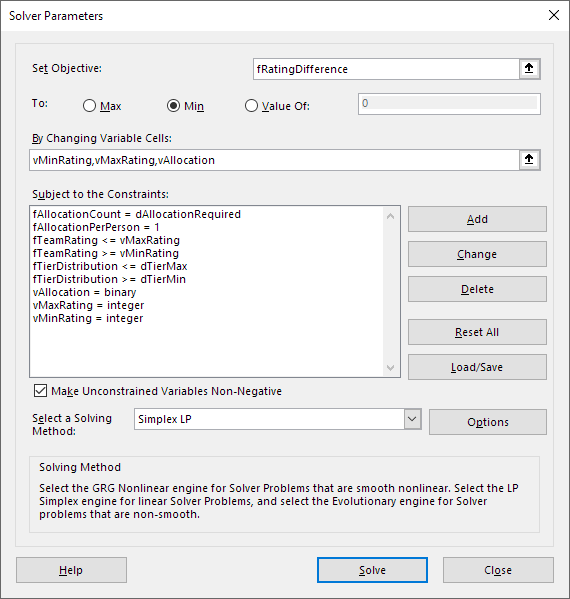

The Solver model is shown in Figure 4. Our objective is to minimize the difference between the total ratings for all teams.

Variables

The model has three sets of variables:

vMinRating. A single number representing the minimum total rating for a team.vMaxRating. A single number representing the maximum total rating for a team.vAllocation. Binary variables that indicate which team each person is allocated to.

The rating bound variables are specified as integers, to guide the solver, though this isn't necessary.

Constraints

The constraints are:

fAllocationCount = dAllocationRequired. This requires each team to have 8 members.fAllocationPerPerson = 1. Each person can be allocated to only one team.fTeamRating <= vMaxRating. The total rating for each team must be less than or equal to the upper bound on the rating.fTeamRating >= vMinRating. The total rating for each team must be greater than or equal to the lower bound on the rating.fTierDistribution <= dTierMax. The number of people in each team must be less than or equal to the team composition upper bound for each tier (c.f. the top part of Figure 3).fTierDistribution >= dTierMin. The number of people in each team must be greater than or equal to the team composition lower bound for each tier (c.f. the bottom part of Figure 3).

A key part of this model's design in that the variables vMinRating and vMaxRating appear as both bounds on the team selection and in the objective function. It is the interaction between these variables in the bounds and the objective function that achieves our desired outcome of making the teams' total ratings as balanced as possible.

Solution method

All the model's relationships are linear, along with some binary and integer variables, so we can use the Simplex method.

This model is sufficiently small that either Solver or OpenSolver can be used, though OpenSolver is much faster for this model.

Analysis

Optimal solution

The optimal vMinRating and vMaxRating bounds are shown in Figure 5. The objective function, which is the difference between those bounds, equals 1. That is, the teams are almost perfectly balanced in aggregate, with total ratings of either 391 or 392. This aligns well with our expectation that the total ratings for each team would be around 1,567 / 4 = 391.75. The range of only 1 is surprisingly small, and quite pleasing, given the diversity of ratings for the individual team members.

Finding a similar solution manually isn't too difficult, in this example. Though if we scale up the situation, to have more people and/or more teams, then finding a good solution manually becomes increasingly difficult.

The mix of tiers in each team is shown in Figure 6. As expected, each team as 4 Analysts, 2 or 3 Seniors, and 1 or 2 Principals. The team that has 2 Principals (Team A) has only 2 Seniors, which makes sense in terms of the ratings allocation and in terms of how we might want the people allocated. This version is much better than our first attempt shown in Figure 2.

This solution fulfils our desire to make balanced teams – in terms of total team ratings and team composition – so this is an excellent solution.

Modelling is often an iterative process

Our first attempt at building the model produced an optimal solution that we didn't like. This is quite a common occurrence when developing optimization models.

In this case, the issue was that our definition of "balanced" was ambiguous, so we used an interpretation that was too narrow given our unstated requirements. After expanding our definition to include team tier composition, we achieved a solution that is much more satisfactory.

Even better, it turns out that we can achieve that balance without compromising the total team rating objective (because there are multiple alternative optima), which is a bonus. In other cases, it might be necessary to make a trade-off between our multiple requirements.

Conclusion

This model is a simple example of allocating people to teams, where we want the teams to be as balanced as possible.

Our model also demonstrates an important aspect of optimization modelling: the stated requirements often don't tell the whole story. We realize that something else is needed only when we see a solution that we don't like.

More generally, models are very pedantic – a model will do what we tell it to do, even if that isn't really what we mean. Clarifying requirements can be a time-consuming iterative process, cycling through changes to the model and examining solutions, until we eventually reach a solution that meets our needs. Iterative design is a normal, though seldom appreciated, part of modelling.

If you would like to know more about this model, or you want help with your own models, then please contact us.